In March, we embarked on a quality improvement project at our nursery that has seen us implement CREC’s outstanding BEEL and EEL programme into our setting.

- BEEL is the ‘Baby Effective Early Learning’ programme which caters for 0-2 year-olds.

- The ‘Effective Early Learning’ (EEL) programme focuses on 3-5s.

- CREC is the Centre for Research in Early Childhood.

Both programmes are based on an observation method pioneered by Professor Ferre Laevers from Leuven University in Belgium. The Professor observed how young children regularly became absorbed in what they were doing and he believed that an involved child is gaining a deep, motivated, intense and long-term learning experience.

You can find out more about CREC and the BEEL/EEL programme on their website.

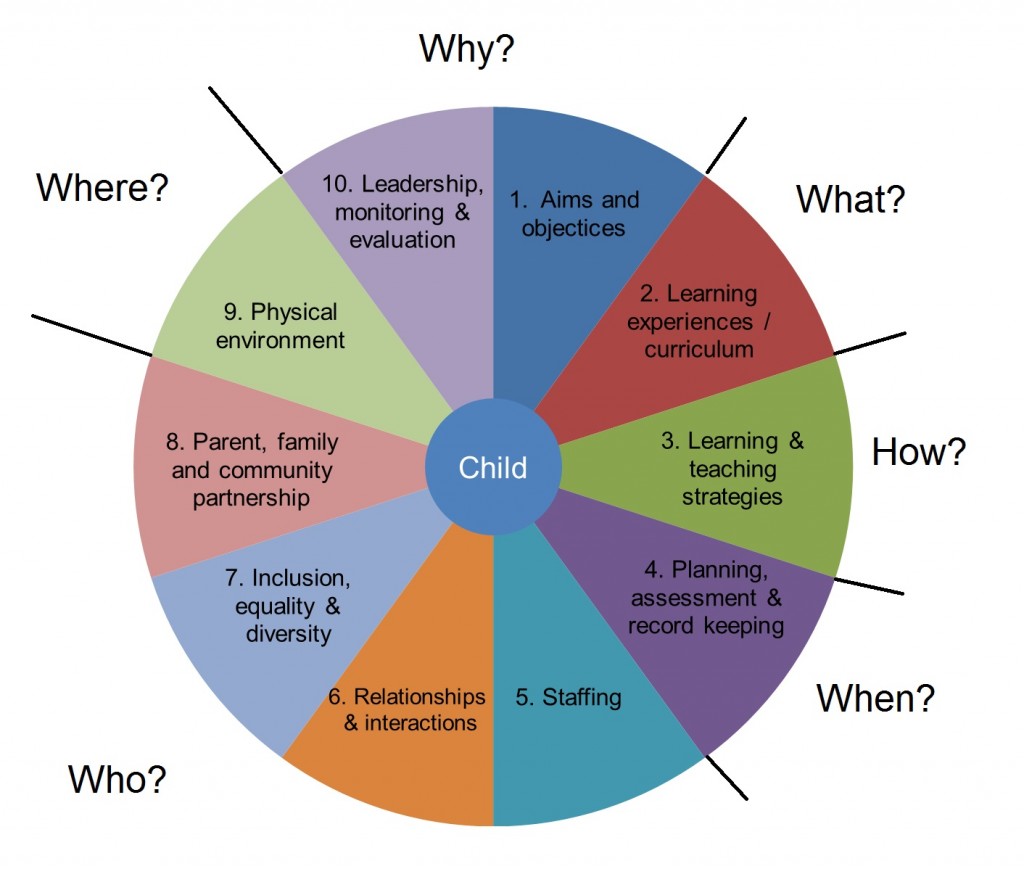

What sets BEEL/EEL apart from many other quality improvement programmes is that it takes a critical and holistic look at your setting in practice.

We firmly believe that theory (and software!) has to work in practice and we subscribe to the importance of holistic development – it is, in fact, one of the core tenets of our philosophy. So, when we were looking to undertake our own quality improvement project, it became clear that BEEL/EEL was a natural fit.

To make sure we fully understood the process, we jumped in the car up to Birmingham and attended CREC’s excellent training course. The course involves only two days of face-to-face training, but it is designed to support you to carry out a rigorous and in-depth self-evaluation of your setting. This process can take up to 12 months.

You don’t get a nice certificate and a pat on the back, but you do come away inspired…and with lots of homework!

With so much to do, we decided we had better get straight to work. So, on our return to London, we immediately set about implementing the programme with our goal to improve quality and enhance provision for all of our learners.

The homework – what did we have to do?

There’s no getting away from the fact that the BEEL and EEL programme required us to collect a lot of information about our practice. Fortunately, CREC sent us away with a number of tools and various data collection methods, including audits, interviews and observations – all of which would help us measure and monitor the effectiveness of practice.

It really is a holistic programme: we had to take a critical look at everything from policies to the environment to resources and more.

We first interviewed a selection of staff (at all levels) and parents about the effectiveness of our practice and asked for their opinions and ideas on how we could improve.

Next, we moved on to a key part of the project that required us to examine our practice in the classroom. This required two separate sets of observations that look at the relationship between children and adults in the nursery in different ways.

1. Child tracking

‘Child tracking’ observations explore the interaction between the child and adult.

There are 13 categories of ‘interaction’ which examine who is leading an interaction/conversation and whether it is balanced between the adult, child or children. You also collect information on the grouping structure when an observation was made (small class, with an adult, etc.) and how much autonomy the child had in choosing an activity.

There is no right or wrong answer, but real value can be gained by exploring the results with your team.

Having said that, the data captured at this stage does indicate how well the EYFS curriculum is being covered in your setting.

2. Child / adult involvement

The next set of observations explores the involvement of the adult and the child in the learning experience.

The level of involvement is scored from 1-5 on a different set of scales based on the categories derived from Professor Laevers’ work.

Child involvement is judged under the categories of ‘levels of connectedness’, ‘meaning making’ and ‘exploration’.

Adult involvement is scored on the level of sensitivity, stimulation and autonomy.

The results from this set of observations immediately indicate apparent causes for concern, as well as positive elements of practice.

*

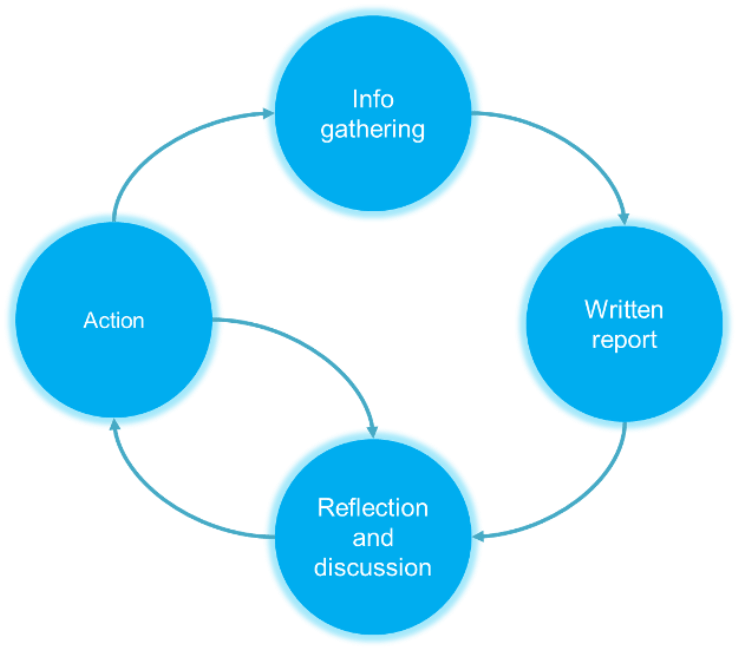

Once we had completed the research, we collated all of the information into a written report, using a template which helped us to summarise the findings. Because the programme covered every part of our practice, the findings in the report have really helped us to identify our strengths and weaknesses and, subsequently, to plan and deliver higher quality childcare. Just as important as the report (if not more so) has been the discussion that the process has facilitated. In particular, it has encouraged us to decide on our next steps as a team.

The outcome: first improvement cycle

We are now at our first improvement cycle and, although we have a long way to go to realise the full benefits, the process itself has already had a positive impact and we’ve learned so much.

Identifying improvements

Like most people who work in childcare, we strive to make our setting an exceptional place in which children can thrive, develop and learn. That requires regular reflection. However, it can be hard to look at your own setting objectively.

BEEL/EEL has helped us to look at our setting objectively. It has helped us to ask the right questions and to drill right down into areas of practice.

The data collection tools have been an invaluable resource and, through using them, we’ve discovered areas for improvement that we would never otherwise have been able to identify. For example, we found an interesting correlation between adult stimulation, a child’s autonomy and the level of child engagement. Although our team scored highly for sensitivity, we discovered that this had less of a bearing on how engaged a child was with an activity. Feeling cared for and supported is essential for a child, but it does not necessarily lead to them being stimulated. Sensitivity needs to be combined with the other elements to make the most difference. This has therefore become a key area for development of our staff.

The results have been eye-opening, even for experienced professionals and for those who spend most of their time in the classroom.

It’s been great to have the new insight, to have our practice challenged (it’s important to be kept on your toes!) and, in some cases, to have instinct backed up by evidence. Ultimately, as a result of undertaking the project, we’re better able to understand what we need to do to improve.

Engagement with parents

The project has reminded us that it’s vital to engage with parents in a critical way. It encouraged us to take the opportunity to sit down one-to-one with parents to simply talk about their views, rather than just their child. It was great to hear their ideas and opinions and a reminder that group coffee mornings aren’t the only place to get more general feedback.

Empowering people

Perhaps the most important outcome so far is that the whole process has been empowering for our practitioners. They have been involved every step of the way and have had the opportunity to decide what happens next. If you want to effect positive change, you need to take people with you. That’s much easier to do when your team feel that they have an influence over the outcome.

Where to next?

We’re now in the process of taking the first steps towards improved practice. A few months down the line, we’ll review where we are and see the progress we’ve made.

I’m pretty confident that we’re heading in the right direction, but I’m aware there is a long way to go. The methodology seems sound and it’s now up to us to follow through with our plans and to deliver what Professors Pascal and Bertram envisage:

“We have seen children, practitioners and parents from all types of communities and settings become stronger, more articulate and more skilled in their approach to learning, and also more discerning about what works to ensure our youngest children achieve their full potential.

It’s a programme which has the ability to change lives and give everyone a stake in creating their futures.”

CREC Directors, Professor Chris Pascal OBE and Professor Tony Bertram

Valuable takeaways

Quality improvement projects require a lot of work, but I would always argue for their benefit. If you cannot understand how your setting is working and how you can make it better, it will remain stagnant. Our practice is the bedrock of all that our nursery is built upon. If we spend the time investing in it, the return will spill over into each aspect of our business.

Time to improve quality is never wasted time.

If you’re not quite ready for the training from CREC, you can still take steps towards those goals by putting in place some of the valuable lessons we have learned so far.

1. Talk to your parents and your staff!

It’s surprising how much can come out of a 15-minute conversation. Parents were more than happy to discuss their ideas with practitioners and some made valuable comments that would never have been expressed otherwise. When we meet with parents it is often to simply discuss their child, not their views. Setting aside time to do this will provide a valuable insight into how a parent sees your setting. Questionnaires are helpful, but one-on-one time allows for more elaboration and further questions.

The same applies to your staff. Give them the time and space to express their opinions regarding practice, as opposed to their performance. As a manager, seeing what the nursery means to them may alter the way you conduct practice.

2. Empower your practitioners in the process

Projects alone will not support improvement. The more your practitioners get ‘on-board’ with the project, the better the results will be and the more willing your team will be to support the collection of data and to alter their practice. Allow your practitioners to feel that the project is as much theirs as it is yours. Listening to their views and gathering their ideas is a starting point. Simply dictating changes is demotivating. Involve and empower your team!

3. Whole setting reflection is essential

One of the most valuable parts of the project so far has been having group discussion and reflection about the end results. We made time to sit down as a team, we analysed, we discussed, we compared and we planned. Everyone had plenty of fresh insights to offer.

Top tip

One way to involve practitioners in next steps is to write each ‘point for improvement’ on a post-it as it is raised. Place each post-it on a large board or wall. At the end of your session, ask practitioners to choose their top three ‘points for improvement’. The three with the most votes can be made into a formal action plan. Keep all of the ideas to work on at a later date.
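If you would rather not count post-its by hand, the tally described in this tip could be sketched in Python as below. It is a minimal illustration under our own assumptions: the function name and the example points are made up, and each practitioner’s choices are simply typed in as a list.

```python
from collections import Counter


def tally_votes(ballots, plan_size=3):
    """Split raised points into an action plan and a 'for later' list.

    ballots: one list per practitioner, holding the points they chose.
    Returns (action_plan, for_later), both ordered by number of votes.
    """
    # set() ensures a practitioner cannot vote for the same point twice.
    tally = Counter(point for ballot in ballots for point in set(ballot))
    ranked = [point for point, _ in tally.most_common()]
    return ranked[:plan_size], ranked[plan_size:]


if __name__ == "__main__":
    # Hypothetical points for improvement, for illustration only.
    ballots = [
        ["outdoor area", "story time", "snack routine"],
        ["outdoor area", "story time", "staff handover"],
        ["outdoor area", "snack routine", "story time"],
        ["outdoor area", "staff handover", "snack routine"],
    ]
    action_plan, for_later = tally_votes(ballots)
    print("Action plan:", action_plan)
    print("Keep for later:", for_later)
```

The second list matters as much as the first: nothing raised in the session is thrown away, it just waits for a later improvement cycle.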

4. Learn to love data

The thought of doing anything maths-related may fill you with dread. Yet, data can help you look at your practice from a different angle. The BEEL/EEL programme scores what could be seen as subjective measures, such as child involvement or adult stimulation, via an objective scale. This makes it easier to analyse and compare one child or adult to another and helps you to better understand support or training requirements.

Without data we guess, but with data we can pinpoint with accuracy and that means we can plan more effectively for every child.
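As a rough illustration of what comparing scores can look like, here is a small Python sketch that averages the 1 to 5 scores for each adult on each scale and lists the averages that fall below a chosen level. The practitioner names, the data layout and the threshold of 3 are all our own assumptions for the example; the programme itself does not define a cut-off like this.

```python
from collections import defaultdict
from statistics import mean


def average_scores(observations):
    """Average score per (practitioner, scale).

    observations: iterable of (practitioner, scale, score) tuples,
    where score is on the 1 to 5 scale.
    """
    grouped = defaultdict(list)
    for practitioner, scale, score in observations:
        if not 1 <= score <= 5:
            raise ValueError(f"score out of range: {score}")
        grouped[(practitioner, scale)].append(score)
    return {key: mean(scores) for key, scores in grouped.items()}


def needs_support(averages, threshold=3):
    """(practitioner, scale) pairs averaging below the hypothetical threshold."""
    return sorted(key for key, avg in averages.items() if avg < threshold)


if __name__ == "__main__":
    # Invented example scores, not real observations.
    observations = [
        ("Practitioner A", "stimulation", 2),
        ("Practitioner A", "stimulation", 3),
        ("Practitioner A", "sensitivity", 5),
        ("Practitioner B", "stimulation", 4),
    ]
    averages = average_scores(observations)
    print(needs_support(averages))
```

The same averages can just as easily be read the other way round, to pick out the scales where a practitioner is consistently strong.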

Don’t forget that it’s equally important to celebrate good practice, and the data you collect can help demonstrate your team’s strengths.

*

I hope this helps inspire you to put your own quality improvement project into place – however big or small. Let me know how you get on!

Heather Stallard
